TLDR:

- Every fraud and AML vendor claims unified data, but when an AI agent queries the platform, the token cost reveals the architecture underneath

- One API call to one data layer means AI spends its budget on analysis

- Multiple calls routed through an orchestration layer means it spends its budget on logistics

- The output looks the same. What it missed doesn’t. A unified data layer means your AI gets the full picture in one query and spends tokens on actual fraud detection work

Teams across fraud and anti-money laundering (AML) are adopting AI faster than any other technology shift in history, and its impact is exponential. Most analysts who spend time in transaction queues and investigation workflows have found the tools on their own, with the understanding that AI could help them parse patterns, test hypotheses and work through complex fraud schemes faster and more efficiently.

They were right. But the efficiency gains aren’t showing up. The analysts who were supposed to spend less time on repetitive work are spending the same amount of time, just in different places. The 2026 Fraud and AML Leaders Report confirmed what most teams already feel: budgets are still growing, headcount demands keep climbing and AI adoption hasn’t reduced operational complexity.

The usual explanation is that AI needs better prompts, more training data and more time to mature, but the real explanation is simpler. Your team’s AI tools are working. The problem is what they’re actually working on.

Your AI has a Budget

Every AI model operates within a finite processing limit called a context window. Think of it as working memory. Everything the AI reads, holds, processes and responds to costs tokens. Tokens are the unit of work, and tokens cost money. When the AI runs out of budget mid-task, the task stops. The investigation doesn’t finish. The briefing doesn’t get generated. The analyst either waits, retries with less data or abandons the query and goes back to doing the work manually.

For enterprise teams, while token limits aren’t capped, every token consumed shows up on your invoice. The more data assembly the AI has to do before it can start analyzing, the higher that bill climbs and the less analysis you get per dollar.

For fraud and AML work, the implication is direct. If the AI spends most of its working memory gathering data from different sources, translating between formats and reconciling conflicting structures, it has less capacity left for the actual work: comparing transaction patterns, identifying connected accounts and spotting the behavioral signals that distinguish a fraud ring from normal activity.

The output looks complete and reads like a real analysis. Still, the signals that require cross-referencing across fraud, AML and device data are precisely the ones that get dropped when the budget runs out before the AI reaches them — and nobody notices what AI didn’t catch.

Two Ways Your AI Is Burning Through Its Budget

Not every team uses AI in the same way, but every approach bears the same hidden costs:

1. The Copy-Paste Workflow

Your analysts are copying transaction records, customer data and risk signals into ChatGPT or Claude, and they’re doing it because the tools are good and the instinct is right. But every copy-paste strips context, which, in turn, breaks chronology. The connections to related accounts are lost, leaving the AI with a puzzle with many pieces missing.

We wrote about this problem in detail in the article, Your Analysts Are Already Using AI. Here’s What They’re Missing. The short version: when a fraud analyst manually feeds 15 data points into an AI tool, but the full picture requires 900+ behavioral signals, the AI’s answer will feel reasonable. It just won’t be complete. The token cost here is brutal. The analyst is spending their time doing work the AI should be doing (assembling context to fill in gaps), and the AI is spending its budget on fragments that don’t connect. Both sides lose.

2. When Separate Systems Pretend to Be One Unified System

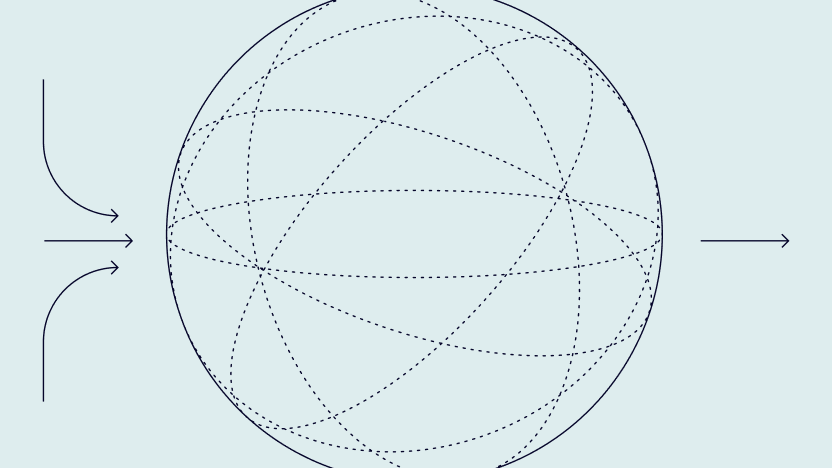

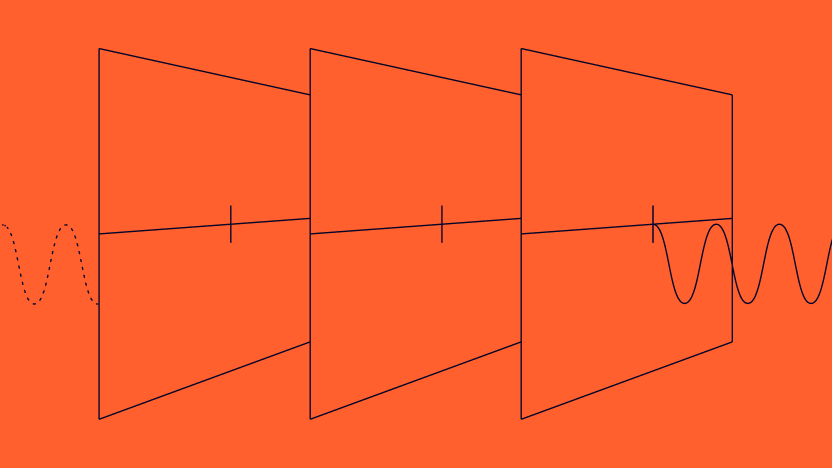

Here’s where the cost becomes invisible. A growing number of fraud and AML platforms claim to offer everything in one place. The dashboard looks unified. The analyst experience feels like a single product. But underneath, the AI is making multiple API calls to multiple services (A transaction monitoring tool acquired two years ago, a device intelligence provider bolted on last year, an AML screening engine white-labeled from a third party). Each maintains its own data model, schema and response format.

The analyst doesn’t see the seams, but AI does. Every cross-signal query that should be one trip becomes five. The AI waits for the slowest service to respond, reconciles the different data structures and only then begins to reason. The working memory that should have been devoted to analysis was diverted to logistics.

While the output still looks like a coherent briefing, four accounts sharing a device fingerprint with a flagged AML alert, a pattern that only becomes visible when device signals and transaction history occupy the same space in the AI’s working memory, quietly gets dropped. The token budget ran out before the AI got there.

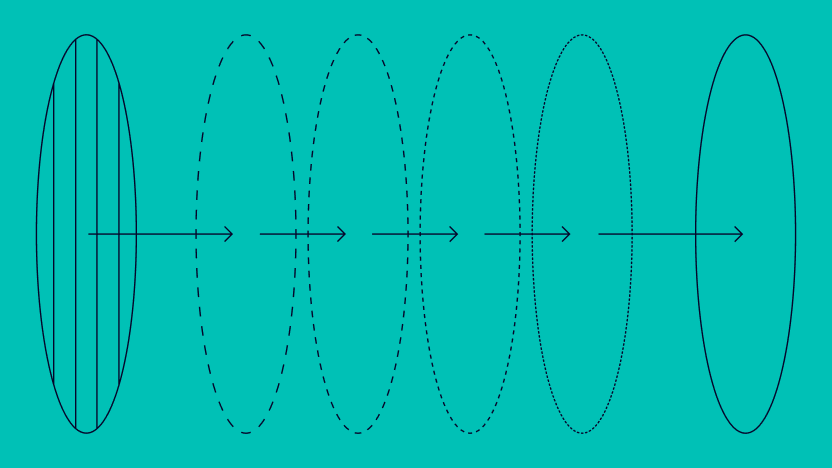

The common response is that context windows are getting bigger and AI models are expanding their capacity. Won’t the constraint just disappear with a million-token context?

The answer is no, and research explains why.

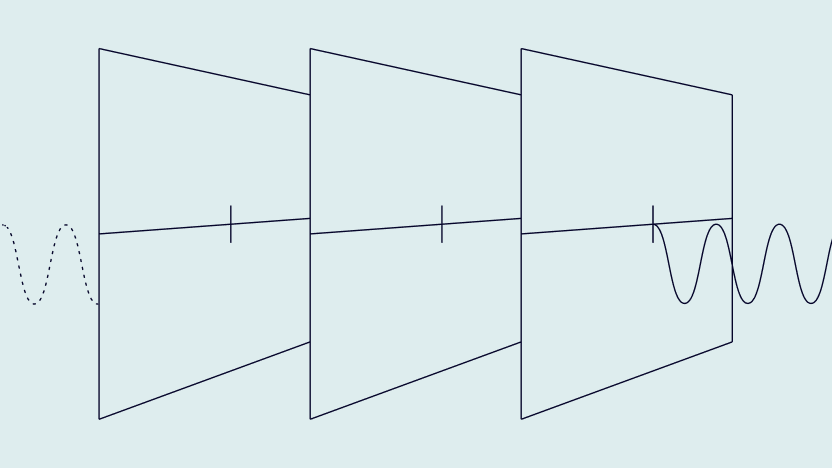

Researchers documented a pattern in how AI models process long inputs. Models attend well to information at the beginning and end of the context window but miss signals buried in the middle. For example, Chroma Research tested 18 leading models in 2025 and found that accuracy dropped significantly as the input size increased from 10,000 to 100,000 tokens. Every model they tested showed degradation, which they coined as context rot.

For fraud and AML work, the practical problem is that the signals that require cross-system reasoning, such as a device flag linked to an AML alert linked to a high-risk transaction, are exactly the signals most likely to land in the middle of a large, assembled context. Bigger windows give you more room, but they don’t change the ratio of logistics to analysis — and they don’t fix the accuracy problem in the middle of that window. If most of a small budget goes to data assembly, most of a larger budget does too. The gap between efficient and inefficient architecture doesn’t close as models scale. It widens.

What Token-Efficient Fraud Prevention AI Actually Looks Like

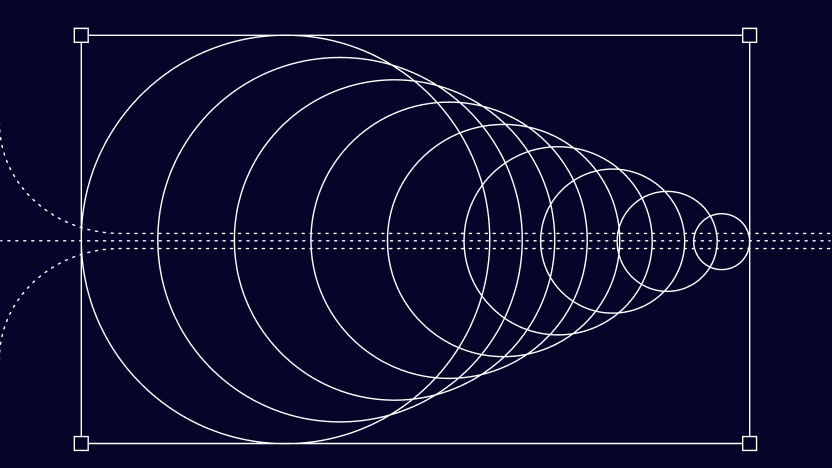

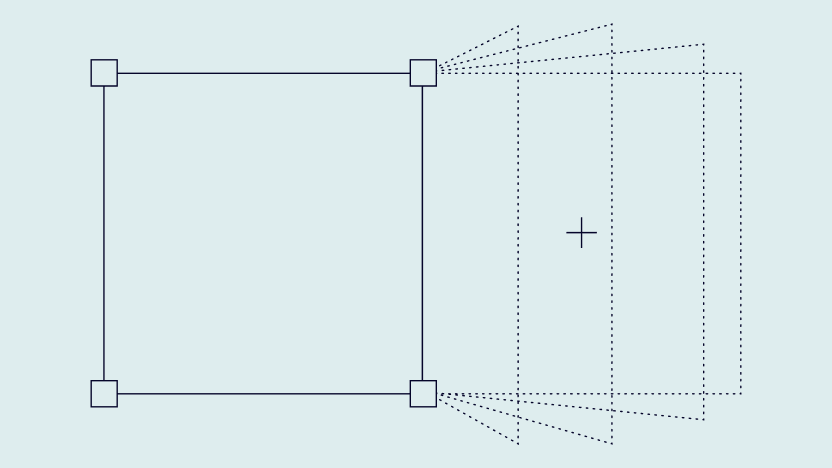

Strip away the jargon, and the answer to token efficiency is mechanical. When all fraud and AML signals live in a single queryable data layer, AI makes one call. One trip. One schema. One response. The agent spends its entire working memory on the real work: comparing 30-day transaction trends against dynamic baselines, identifying connected accounts through shared device fingerprints and cross-referencing AML alerts with behavioral anomalies in a single pass.

When the platform orchestrates across multiple services behind a unified-looking interface, the agent makes multiple trips. If your team’s AI consumes thousands of dollars in tokens annually, how much of that spend goes to analysis and how much goes to reassembling a picture that a unified data layer would have ready before the first token was spent?

Four Questions Worth Asking About Your Own Setup

Every vendor will show you a clean dashboard and trot out the pledge of unification. Their demo will look convincing because demos are designed to, but here are the four questions really worth asking to understand what separates the architecture from just marketing-speak:

If the answer involves AI browser navigation, sequential API calls per signal type, manual data exports or copy-paste before AI can begin, the budget is bleeding before analysis starts. The cost is invisible in the output but shows up in everything the output fails to catch, and when the budget runs dry before the workday ends.

A setup that stores fraud signals and AML signals in separate systems forces the AI to reconcile them before it can reason across them. The signals that only exist at the intersection, a high-risk transaction linked to an AML-flagged account via a shared device fingerprint, are the ones most likely to be missed.

If the platform resolves a cross-signal query by fanning out requests to multiple underlying services, whether those are internal microservices or third-party providers acquired over time, the unification is cosmetic. The AI still pays the token cost of multiple round-trips and multiple reconciliation steps. The dashboard may look like one product, but the AI knows it isn’t.

Most teams can’t answer this. Not knowing is itself a signal. If you can’t get a clear answer from your vendor or your own engineering team, the budget is probably being spent on logistics nobody has been measuring.

These questions don’t require a technical audit, but they do require honest conversations. The answers will tell you whether your AI’s context budget is being spent on analysis — or on the logistics of assembling a picture that a unified architecture would have ready before the first token is spent.

Speak with an Expert