The landscape of fraud is shifting at breakneck speed. As financial institutions and e-commerce giants grapple with increasingly sophisticated attacks, machine learning (ML) has moved from a “nice-to-have” to the frontline of defense.

The numbers show how far adoption has come. According to SEON’s 2026 Fraud & AML Leaders Report, 98% of fraud and AML leaders already integrate ML into daily workflows, and 95% are confident in its ability to detect and prevent fraud. Yet only 47% of organizations run fully integrated workflows, a sign that adoption alone isn’t enough.

In this guide, we explore the mechanics of machine learning, the critical difference between Blackbox and Whitebox systems, and how businesses can leverage these algorithms to protect their bottom line.

Key Takeaways

- Machine learning analyzes transactions in real time, flagging suspicious patterns before losses occur. That speed is essential as fraud threats grow faster than defenses can keep up.

- Supervised and unsupervised models catch both known and emerging threats, yet only 47% of organizations run fully integrated fraud and AML workflows, leaving dangerous blind spots.

- According to SEON’s 2026 Fraud & AML Leaders Report, 80% of fraud leaders struggle to achieve a unified data view, a sign that detection accuracy alone isn’t enough without the right architecture.

- AI is redefining fraud teams rather than replacing them: 94% of leaders plan to add headcount in 2026, up from 88% the prior year, as operational complexity outpaces automation gains.

What is Fraud Detection with Machine Learning?

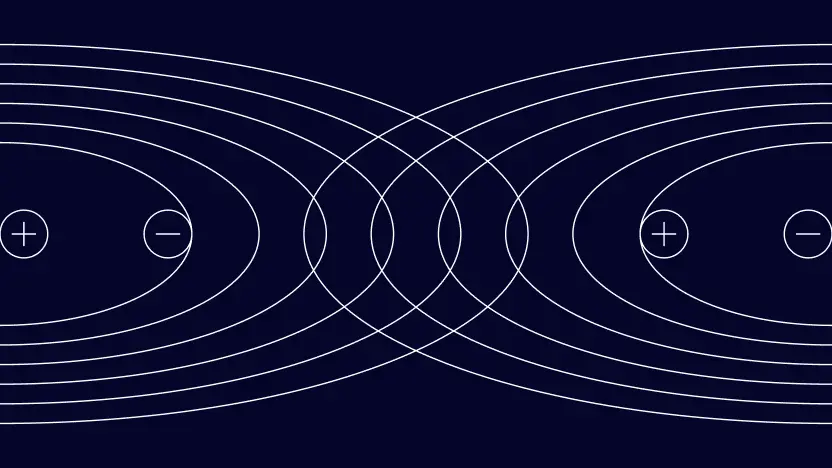

Fraud detection with machine learning is the use of automated algorithms to analyze large datasets and identify suspicious activity in real time. These systems process transaction data and behavioral signals to establish a baseline of normal behavior. The model then flags deviations that suggest fraud before losses occur.
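
To make the idea of a behavioral baseline concrete, here is a minimal sketch that summarizes a user’s past transaction amounts and flags a new amount that deviates sharply from them. The z-score approach, the function names and the 3-standard-deviation threshold are illustrative assumptions, not how any particular product works; real systems combine many more signals than a single amount.

```python
from statistics import mean, stdev


def build_baseline(amounts: list[float]) -> tuple[float, float]:
    """Summarize a user's past transaction amounts as (mean, sample standard deviation)."""
    if len(amounts) < 2:
        raise ValueError("need at least two past transactions to estimate spread")
    return mean(amounts), stdev(amounts)


def flag_deviation(amount: float, baseline: tuple[float, float], threshold: float = 3.0) -> bool:
    """Flag the amount if it lies more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # Every past amount was identical, so any different amount counts as a deviation.
        return amount != mu
    return abs(amount - mu) / sigma > threshold


history = [42.0, 55.5, 38.0, 61.0, 47.5, 50.0]  # hypothetical past purchases
baseline = build_baseline(history)
print(flag_deviation(52.0, baseline))   # False: in line with past spending
print(flag_deviation(900.0, baseline))  # True: far outside the baseline
```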

Machine learning models stand apart from traditional rules-based systems. Rules are static, so criminals can learn them and work around them. As fraudsters change tactics, rules must be updated manually, which is highly time-consuming.

Machine learning, by contrast, adapts to new fraud patterns and can identify both known and emerging threats, such as unusual spending amounts or frequent transactions from high-risk locations. Models also improve through a feedback loop in which analysts assess the model’s decisions and feed their findings back into it.

How Machine Learning Prevents & Detects Fraud

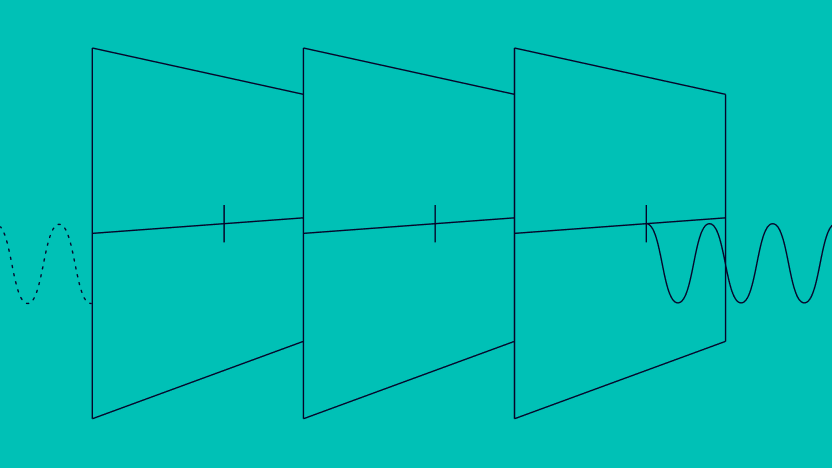

The learning process for advanced machine learning models typically involves two methodologies: supervised and unsupervised learning.

Supervised Learning

The system learns from millions of records that are already labeled as fraudulent or legitimate. The model then classifies new transactions based on these previously observed patterns.
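
A minimal sketch of supervised learning, assuming a tiny hand-labeled dataset with two illustrative features (transaction amount in thousands and number of transactions in the past hour). It fits a logistic regression with plain batch gradient descent using only the standard library; a production system would use an established ML library, far more data and many more features.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(x, w, b):
    """Estimated probability that the transaction features `x` are fraudulent."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def train_logistic(X, y, lr=0.1, epochs=1000):
    """Fit logistic regression weights with batch gradient descent on labeled data."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(xi, w, b) - yi  # prediction minus true label (1 = fraud)
            grad_w = [g + err * xj for g, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


# Hypothetical labeled history: [amount in thousands, transactions in the last hour]
X = [[0.05, 1], [0.12, 2], [0.08, 1], [0.30, 1], [0.20, 2],
     [2.50, 9], [1.80, 7], [3.10, 12], [2.20, 8]]
y = [0, 0, 0, 0, 0, 1, 1, 1, 1]  # 1 = labeled fraud, 0 = labeled legitimate

w, b = train_logistic(X, y)
print(predict_proba([0.10, 1], w, b))   # low: resembles the legitimate examples
print(predict_proba([2.80, 10], w, b))  # high: resembles the labeled fraud
```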

Unsupervised Learning

Unsupervised learning lets models explore data without prior labels or knowledge. The system decides on its own what looks ordinary and what looks unusual, which makes it useful for catching “unknown unknowns”: emerging fraud types that have not yet been detected. You can read more about SEON’s approach to early fraud detection in SEON’s AI Perspective: The Next Era of Fraud Prevention.
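
Unsupervised detection can be sketched without any labels at all. The hypothetical example below (an illustration, not SEON’s method) scores each transaction by its mean distance to its k nearest neighbors in feature space, so points that sit far from everything else look unusual. The features, k=2 and the sample data are assumptions.

```python
import math


def knn_anomaly_scores(points, k=2):
    """For each point, return the mean Euclidean distance to its k nearest neighbors."""
    scores = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        scores.append(sum(dists[:k]) / k)
    return scores


# Unlabeled transactions: [amount in hundreds, hour of day / 24] (hypothetical features)
transactions = [[0.5, 0.50], [0.6, 0.55], [0.4, 0.45], [0.55, 0.52],
                [0.45, 0.48], [9.0, 0.13]]
scores = knn_anomaly_scores(transactions)
most_unusual = max(range(len(scores)), key=scores.__getitem__)
print(most_unusual)  # 5: the large late-night transaction
```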

Machine learning uses these methods to deliver:

- Real-Time Risk Assessment: Algorithms process data the moment it arrives. This empowers banks to take swift action.

- Behavioral Biometrics: Models analyze typing rhythms, mouse movements and spending habits. This delivers passive authentication without introducing customer friction.

- Continuous Adaptation: Self-optimizing technologies automatically retrain models. This ensures your defense remains robust against the latest tricks employed by fraudsters.
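
Real-time risk assessment often relies on streaming features such as velocity: how many transactions an account has made in a recent window. Below is a minimal sketch of a per-account sliding-window counter. The `VelocityTracker` class, the 60-second window and the limit of 5 are hypothetical choices for illustration, and the sketch assumes events arrive in timestamp order.

```python
from collections import defaultdict, deque


class VelocityTracker:
    """Count each account's transactions inside a sliding time window."""

    def __init__(self, window_seconds: float = 60.0, limit: int = 5):
        self.window = window_seconds
        self.limit = limit
        self.events = defaultdict(deque)  # account_id -> timestamps, oldest first

    def record(self, account_id: str, timestamp: float) -> bool:
        """Record a transaction and return True if the account exceeds the limit."""
        times = self.events[account_id]
        times.append(timestamp)  # assumes timestamps arrive in increasing order
        # Drop timestamps that have fallen out of the window.
        while times and times[0] <= timestamp - self.window:
            times.popleft()
        return len(times) > self.limit


tracker = VelocityTracker()
flags = [tracker.record("acct-1", t) for t in [0, 5, 10, 15, 20, 25]]
print(flags)                          # [False, False, False, False, False, True]
print(tracker.record("acct-1", 200))  # False: earlier events have expired
```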

The Benefits of Machine Learning vs Traditional Fraud Detection

Machine learning allows your team to do in milliseconds what an analyst team cannot do in a full shift.

| Benefit | Machine Learning | Traditional Rules |
|---|---|---|
| Detection Speed | Real-time instant alerts as transactions occur | Often delayed and batch-based |
| Accuracy | High, adapts rapidly to known and emerging threats | Lower, frequently misses novel fraud and can be bypassed |
| False Positives | Low, learns normal behavior patterns over time | High, static rules block legitimate users |
| Adaptability | Self-learns from new data and analyst feedback | Relies on manual updates and constant rule maintenance |
| Regulatory Compliance | Built-in audit trails support model risk frameworks | Retrospective reconstruction creates compliance gaps |

How Businesses Are Using Machine Learning for Fraud Detection

Machine learning for fraud detection is industry-agnostic. According to SEON’s 2026 Fraud & AML Leaders Report, adoption spans payments, fintech, retail, eCommerce, and betting and gaming, with 98% of fraud leaders already integrating AI into daily workflows across these sectors.

- Financial institutions and Fintech: Account takeover is the top threat reported by fraud leaders at 26%, followed by promotion abuse at 18%. The challenge for financial institutions is that credential-based attacks move fast and look legitimate until they don’t. ML models build behavioral profiles for each user so that even when login credentials are valid, anomalies in device, location or session behavior trigger a review before any damage is done.

- Retail and eCommerce: Return fraud and chargebacks account for 18% and 16% of reported threats respectively, making disputed transaction behavior one of the costliest problems retailers face. ML systems learn the difference between a genuine refund pattern and an abusive one by analyzing transaction history, account age and behavioral signals together, catching abuse that simple rules would approve without hesitation.

- Betting and Gaming: Loyalty and rewards program abuse accounts for 13% of reported threats in this sector. Fraudsters exploit bonus structures at scale using bots and synthetic accounts, making volume and velocity the key signals to watch. Machine learning identifies unusual redemption patterns and clusters of coordinated activity across high-value player accounts that a rules-based system would treat as isolated events.

- Payments and BNPL: Alternative payment abuse, including crypto, accounts for 6% of reported threats and is growing. In BNPL specifically, the window between application and first repayment is where synthetic identity fraud is most damaging. ML models analyze login behavior, transaction velocity and device signals together to catch fraudulent applications before credit is extended.
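
To illustrate the account takeover case above, here is a hypothetical sketch that compares a login with valid credentials against the user’s behavioral profile and lists which signals are new. The profile fields (known devices, countries and usual login hours) and the rule that two or more novel signals trigger a review are assumptions chosen for clarity; a trained model would weigh such signals rather than simply count them.

```python
from dataclasses import dataclass, field


@dataclass
class UserProfile:
    known_devices: set = field(default_factory=set)
    known_countries: set = field(default_factory=set)
    usual_hours: set = field(default_factory=set)  # hours of day (0-23) seen before


@dataclass
class Session:
    device_id: str
    country: str
    hour: int


def novel_signals(profile: UserProfile, session: Session) -> list[str]:
    """List the session attributes never seen before for this user."""
    novel = []
    if session.device_id not in profile.known_devices:
        novel.append("new_device")
    if session.country not in profile.known_countries:
        novel.append("new_country")
    if session.hour not in profile.usual_hours:
        novel.append("unusual_hour")
    return novel


def needs_review(profile: UserProfile, session: Session, min_signals: int = 2) -> bool:
    # Credentials already passed; route to review when enough behavior looks unfamiliar.
    return len(novel_signals(profile, session)) >= min_signals


profile = UserProfile({"dev-a"}, {"DE"}, {8, 9, 18, 19})
print(needs_review(profile, Session("dev-a", "DE", 9)))  # False: familiar session
print(needs_review(profile, Session("dev-x", "BR", 3)))  # True: new device, country and hour
```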

5 Steps to Implementing Machine Learning for Fraud Prevention

Most organizations already have the data they need to fight fraud. The gap is in how that data gets structured, labeled and fed into a system that learns from it. Here is how to turn historical transaction data into a proactive defense.

1. Define Your Data Inputs

The model needs clean, labeled transaction data covering transaction values, device fingerprints, IP reputation and behavioral signals. The richer and fresher the data, the more accurate the outputs. Crucially, labeling must cover both positive and negative outcomes, not just confirmed fraud cases. Because fraudulent events are naturally much rarer than legitimate ones, a model trained only on confirmed fraud develops a skewed picture of your risk profile. Labeling good transactions alongside bad ones gives the algorithm a complete baseline to learn from.
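
A minimal sketch of what a labeled training record and a class-balance check might look like. The field names are hypothetical, and inverse-frequency class weighting is one common way (an assumption here, not necessarily SEON’s approach) to stop a rare fraud class from being drowned out by legitimate traffic.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class LabeledTransaction:
    amount: float
    device_fingerprint: str
    ip_risk_score: float  # e.g. from an IP reputation lookup (hypothetical field)
    label: str            # "fraud" or "legitimate": both outcomes are labeled


def class_weights(records):
    """Weight each class by n_samples / (n_classes * class_count) so rare classes count more."""
    counts = Counter(r.label for r in records)
    n, k = len(records), len(counts)
    return {label: n / (k * c) for label, c in counts.items()}


records = [LabeledTransaction(40.0, "fp1", 0.1, "legitimate") for _ in range(98)]
records += [LabeledTransaction(950.0, "fp9", 0.9, "fraud") for _ in range(2)]
weights = class_weights(records)
print(weights)  # each fraud example weighs 49 times as much as a legitimate one
```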

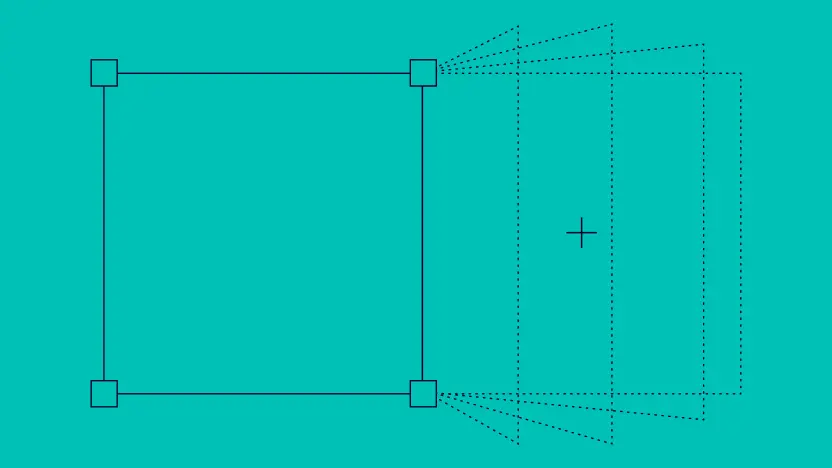

2. Test Against Historical Transactions

Before going live, run your rules and models against past transactions in a sandbox environment. This reveals how accurately the system would have performed over any given time period without exposing real users to risk. The results should show each rule’s expected accuracy and the volume of transactions it would affect, so analysts can see what they are activating before it touches live traffic. This step surfaces gaps in your ruleset and reduces the chance of deploying logic that generates false positives at scale.
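
Backtesting a single rule can be sketched as replaying labeled historical transactions through it and counting outcomes. The rule, the data and the metric names below are hypothetical; precision stands in for an “accuracy score” and the share of flagged transactions for “transaction impact”.

```python
def backtest(rule, history):
    """Replay labeled history through `rule` and report precision, recall and impact."""
    tp = fp = fn = 0
    for tx, is_fraud in history:
        flagged = rule(tx)
        if flagged and is_fraud:
            tp += 1
        elif flagged:
            fp += 1
        elif is_fraud:
            fn += 1
    flagged_total = tp + fp
    return {
        "precision": tp / flagged_total if flagged_total else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "impact": flagged_total / len(history),  # share of traffic the rule would touch
    }


def flag_large_risky(tx):
    """Hypothetical rule: purchases over 500 from IPs with a risk score above 0.7."""
    return tx["amount"] > 500 and tx["ip_risk"] > 0.7


history = [
    ({"amount": 900, "ip_risk": 0.9}, True),
    ({"amount": 650, "ip_risk": 0.8}, True),
    ({"amount": 700, "ip_risk": 0.75}, False),  # would be a false positive
    ({"amount": 40, "ip_risk": 0.95}, True),    # fraud the rule misses
    ({"amount": 120, "ip_risk": 0.1}, False),
    ({"amount": 80, "ip_risk": 0.2}, False),
]
print(backtest(flag_large_risky, history))  # precision 2/3, recall 2/3, impact 0.5
```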

3. Generate and Score Rules

Once backtesting is complete, the engine identifies both single-parameter heuristic rules and complex multi-parameter logic based on patterns in your data. The strongest systems go further by surfacing AI rule suggestions automatically, flagging emerging risk patterns as they appear and recommending new rules tailored to evolving fraud tactics. Each suggestion comes with a predicted accuracy and transaction impact estimate, so analysts can prioritize what matters most rather than chasing noise.
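
One simple way to generate single-parameter rule candidates is to try every observed value of a feature as a threshold and score the resulting rule on labeled data; multi-parameter rules can then be built by combining two such conditions. The sketch below does exactly that. It is a toy illustration under our own assumptions (only “greater than” conditions, a minimum precision of 0.8, ranking by precision then recall, hypothetical feature names), not how SEON’s engine generates suggestions.

```python
from itertools import combinations


def score(flags, labels):
    """Return (precision, recall) of a rule's flags against fraud labels (1 = fraud)."""
    tp = sum(1 for f, y in zip(flags, labels) if f and y)
    flagged, frauds = sum(flags), sum(labels)
    return (tp / flagged if flagged else 0.0), (tp / frauds if frauds else 0.0)


def suggest_rules(rows, labels, features, min_precision=0.8):
    """Search 'feature > threshold' rules and pairwise ANDs; keep those above min_precision."""
    singles = []
    for feat in features:
        for thr in sorted({row[feat] for row in rows}):
            singles.append((feat, f"{feat} > {thr}", [row[feat] > thr for row in rows]))
    candidates = [(desc, flags) for _, desc, flags in singles]
    for (fa, da, xa), (fb, db, xb) in combinations(singles, 2):
        if fa != fb:  # multi-parameter rule: combine conditions on different features
            candidates.append((f"{da} AND {db}", [a and b for a, b in zip(xa, xb)]))
    scored = [(desc, *score(flags, labels)) for desc, flags in candidates]
    kept = [s for s in scored if s[1] >= min_precision]
    # Rank by precision, then by recall (share of known fraud the rule would catch).
    return sorted(kept, key=lambda s: (s[1], s[2]), reverse=True)


# Hypothetical labeled data where no single condition separates fraud cleanly
rows = [
    {"amount": 900, "failed_logins": 4},
    {"amount": 850, "failed_logins": 3},
    {"amount": 950, "failed_logins": 0},
    {"amount": 60, "failed_logins": 5},
    {"amount": 70, "failed_logins": 0},
    {"amount": 50, "failed_logins": 1},
]
labels = [1, 1, 0, 0, 0, 0]
for desc, precision, recall in suggest_rules(rows, labels, ["amount", "failed_logins"])[:3]:
    print(f"{desc}: precision={precision:.2f}, recall={recall:.2f}")
```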

4. Review and Validate Before Activation

No rule should go live without human sign-off. Analysts review suggestions against predicted outcomes and activate only what meets the required accuracy threshold, keeping humans in control of every decision. This is where explainability becomes non-negotiable. If analysts cannot see which signals drove a risk score, they cannot confidently approve or reject a rule. Transparent, whitebox decisioning at this stage reduces misinterpretation and builds the kind of audit trail regulators expect.
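
Whitebox explainability is easiest to see with a linear score, where each signal’s contribution is simply its weight times its value. The sketch below uses hypothetical signal names, weights and an assumed 0.9 accuracy threshold: it ranks contributions for a reviewer and only activates a rule when it both clears the threshold and has an analyst’s explicit approval.

```python
def explain(weights: dict, signals: dict) -> list[tuple[str, float]]:
    """Return each signal's contribution (weight * value), largest magnitude first."""
    contributions = {name: weights[name] * value for name, value in signals.items()}
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


def can_activate(predicted_accuracy: float, analyst_approved: bool, threshold: float = 0.9) -> bool:
    """A rule goes live only if it meets the accuracy bar AND a human has signed off."""
    return predicted_accuracy >= threshold and analyst_approved


weights = {"ip_is_proxy": 2.0, "email_age_days": -0.01, "failed_logins": 0.8}  # hypothetical
signals = {"ip_is_proxy": 1, "email_age_days": 3, "failed_logins": 4}
for name, contribution in explain(weights, signals):
    print(f"{name}: {contribution:+.2f}")
print(can_activate(0.93, analyst_approved=False))  # False: no human sign-off yet
```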

5. Feed Results Back Into the Model

Ongoing feedback is what separates a static system from an adaptive one. Labeling outcomes as fraud, legitimate or review trains the model continuously, making it smarter with every decision it processes. The most effective approach is continuous, right-in-time labeling, feeding verified outcomes back into the system as soon as your team has confirmed them rather than batching labels weekly or monthly. Precise labels matter too: marking a case as bonus abuse rather than generic fraud gives the model the specificity it needs to build targeted detection logic for that threat type going forward.
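
Continuous labeling can be sketched as an online model that takes one gradient step each time an analyst confirms an outcome. In this hypothetical example, fine-grained fraud labels such as `bonus_abuse` are kept for per-type reporting while every fraud subtype trains the same binary model, and cases still marked `review` are held back until they are resolved. Those choices, the learning rate and the feature names are all assumptions.

```python
import math
from collections import Counter

# Hypothetical fine-grained labels; every fraud subtype maps to the positive class.
FRAUD_LABELS = {"bonus_abuse", "account_takeover", "chargeback_fraud"}


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class OnlineFraudModel:
    """Logistic model updated one confirmed label at a time (online gradient descent)."""

    def __init__(self, features, lr=0.1):
        self.w = {f: 0.0 for f in features}
        self.b = 0.0
        self.lr = lr
        self.label_counts = Counter()  # confirmed outcomes per specific label

    def predict(self, x: dict) -> float:
        return sigmoid(self.b + sum(self.w[f] * x[f] for f in self.w))

    def feedback(self, x: dict, label: str) -> bool:
        """Learn from an analyst's verdict as soon as it is confirmed; return True if applied."""
        if label == "review":
            return False  # still unresolved: wait for a final verdict before training
        self.label_counts[label] += 1
        y = 1.0 if label in FRAUD_LABELS else 0.0
        err = self.predict(x) - y
        for f in self.w:
            self.w[f] -= self.lr * err * x[f]
        self.b -= self.lr * err
        return True


model = OnlineFraudModel(["bonus_claims_24h", "shared_device"])
case = {"bonus_claims_24h": 5.0, "shared_device": 1.0}
before = model.predict(case)  # 0.5 while all weights are zero
for _ in range(20):
    model.feedback(case, "bonus_abuse")
model.feedback({"bonus_claims_24h": 0.0, "shared_device": 0.0}, "legitimate")
model.feedback(case, "review")  # ignored until resolved
print(model.predict(case) > before)  # True
print(dict(model.label_counts))      # {'bonus_abuse': 20, 'legitimate': 1}
```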

How SEON Uses Machine Learning Against Fraud and Money Laundering

Most machine learning platforms feed opaque models with third-party data, leaving analysts with a score but no explanation. SEON uses real-time first-party risk indicators and consortium data to power transparent, explainable decisioning that regulators, reviewers and auditors can actually interrogate. The platform combines 900+ fraud signals with AI-driven rule suggestions, automatically identifying emerging risk patterns and recommending new rules based on how they would perform against real transaction data.

Analysts see exactly which signals influenced each decision, which reduces misinterpretation and accelerates reviews. Machines handle pattern detection at scale, while humans retain full oversight of the decisions that matter most, with AI summaries and post-decision automations connecting every step into a single auditable workflow.

FAQ About Machine Learning for Fraud Detection

How does machine learning help detect fraud?

ML detects risk automatically based on your historical data. It reduces the time spent on manual reviews and identifies patterns that are invisible to the human eye.

How is machine learning different from traditional rules-based fraud detection?

Traditional rules rely on static logic that fraudsters can bypass easily. Machine learning uses adaptive algorithms to analyze complex data patterns in real time, identifying risk through behavioral context rather than tripping on single variables.

Can machine learning detect fraud in real time?

Yes. Machine learning detects fraud in real time by analyzing first-party risk indicators to uncover suspicious patterns instantly. SEON uses adaptive AI and 900+ fraud signals to block evolving threats before losses occur while providing explainable insights.

What role does generative AI play in fraud detection?

GenAI helps analysts build rules, generate summaries and describe suspicious behavior in plain English, but it cannot make real-time transactional decisions at millisecond speed. Effective fraud detection combines both. Read more in SEON’s AI Perspective: The Next Era of Fraud Prevention.